Hello, and welcome to The Aggregate, the newsletter offering in-depth analysis of topical yet unusual datasets and technical topics. If you want to sign up, there is a button for that below, or just read on!

The Weekly Explainer:

If you have ever worked with data that contains addresses, you know that some analysis tasks require the coordinates of a location, and not just its address, to answer questions like:

What is the average distance from each address to every other address, or to a specific point? How many data points fall within each zip code, state, or whatever boundary system you are using in an analysis?

Instead of Googling the address of each location and copying and pasting the latitude/longitude coordinates, it is possible to automate this process, which is known as geocoding.

What is Geocoding?

Geocoding is the process of converting an address into a set of latitude & longitude coordinates. Geocoding systems existed before the internet, in software such as GRASS GIS or MOSS, and later through vendors such as ESRI.

One major development came in the 1990s, when the US Census used street geocoding to build a geospatial database and create TIGER, a format the Census uses to describe areas such as census tracts.

In the 2000s, reverse geocoding became possible, that is, turning a set of latitude & longitude coordinates into an address, and consumer geocoding platforms such as Google Maps or MapQuest were on the rise.

Accuracy depends on the state of the datasets that contain this information. With TIGER, 5-7.5% of addresses may be placed in a different census tract, while in Australia that number is as high as 50%.

Geocoding can be used for a variety of purposes, from tagging photos on social media to improving data analysis: once the data is in a form legible to GIS systems, more complicated forms of geospatial analysis become possible.
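As a small illustration of what coordinates make possible, here is a minimal sketch of the distance question from the introduction. It uses the haversine formula in base R. The points, the reference location, and the function name `haversine_km` are all made up for the example, and the Earth is treated as a sphere with a radius of 6371 km, so the results are approximate.

```r
# Great-circle distance in kilometres between points given in decimal degrees.
# Assumes a spherical Earth (radius_km), which is an approximation.
haversine_km <- function(lat1, lon1, lat2, lon2, radius_km = 6371) {
  to_rad <- pi / 180
  dlat <- (lat2 - lat1) * to_rad
  dlon <- (lon2 - lon1) * to_rad
  a <- sin(dlat / 2)^2 +
    cos(lat1 * to_rad) * cos(lat2 * to_rad) * sin(dlon / 2)^2
  2 * radius_km * asin(pmin(1, sqrt(a)))
}

# Hypothetical geocoded points, for illustration only.
places <- data.frame(
  name = c("A", "B", "C"),
  lat  = c(44.98, 44.95, 44.94),
  lon  = c(-93.27, -93.09, -93.26)
)

# Distance from every place to one (hypothetical) reference point.
ref <- c(lat = 44.9778, lon = -93.2650)
places$km_to_ref <- haversine_km(places$lat, places$lon,
                                 ref[["lat"]], ref[["lon"]])

# Average distance over all distinct pairs of places.
pairs <- combn(nrow(places), 2)
pair_km <- haversine_km(
  places$lat[pairs[1, ]], places$lon[pairs[1, ]],
  places$lat[pairs[2, ]], places$lon[pairs[2, ]]
)
mean_pair_km <- mean(pair_km)
```

For anything beyond a quick estimate, a dedicated spatial package is likely the better choice, since it handles projections and ellipsoidal distances for you.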

How can you do it?

There are several ways one can geocode a set of addresses. In R, one can use the ggmap package if one has an API key for Google Maps. There is the Geocoder package as well, but one can also send a request directly to the Google Maps API with the address in the parameters. The US Census also provides a geocoding REST API.

If you do not feel comfortable programming or do not know how, Texas A&M provides an online geocoding service you can use by hand. UCLA provides a similar service, and you can upload CSV files to the site to have them geocoded for you.
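To make the "send a request with the address in the parameters" idea concrete, here is a rough sketch in base R that only builds the request URL for the Census geocoder. The endpoint path, the `benchmark` value, and the function name `census_geocode_url` are assumptions on my part, so check them against the current Census Geocoder documentation before relying on them.

```r
# Build a request URL for a one-line address lookup.
# ASSUMPTION: the endpoint path and the default benchmark name below are
# taken from memory of the Census Geocoder API and may have changed.
census_geocode_url <- function(
    address,
    benchmark = "Public_AR_Current",
    base = "https://geocoding.geo.census.gov/geocoder/locations/onelineaddress") {
  query <- c(address = address, benchmark = benchmark, format = "json")
  # Percent-encode each value so spaces and commas are safe in a URL.
  encoded <- vapply(query, utils::URLencode, character(1), reserved = TRUE)
  paste0(base, "?", paste(names(encoded), encoded, sep = "=", collapse = "&"))
}

# Example address, used here purely for illustration.
url <- census_geocode_url("350 S 5th St, Minneapolis, MN")
print(url)
```

From there, something like `jsonlite::fromJSON(url)` should fetch and parse the response. I am assuming the coordinates sit under the address matches in the returned JSON, so inspect the structure before extracting anything.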

A case study

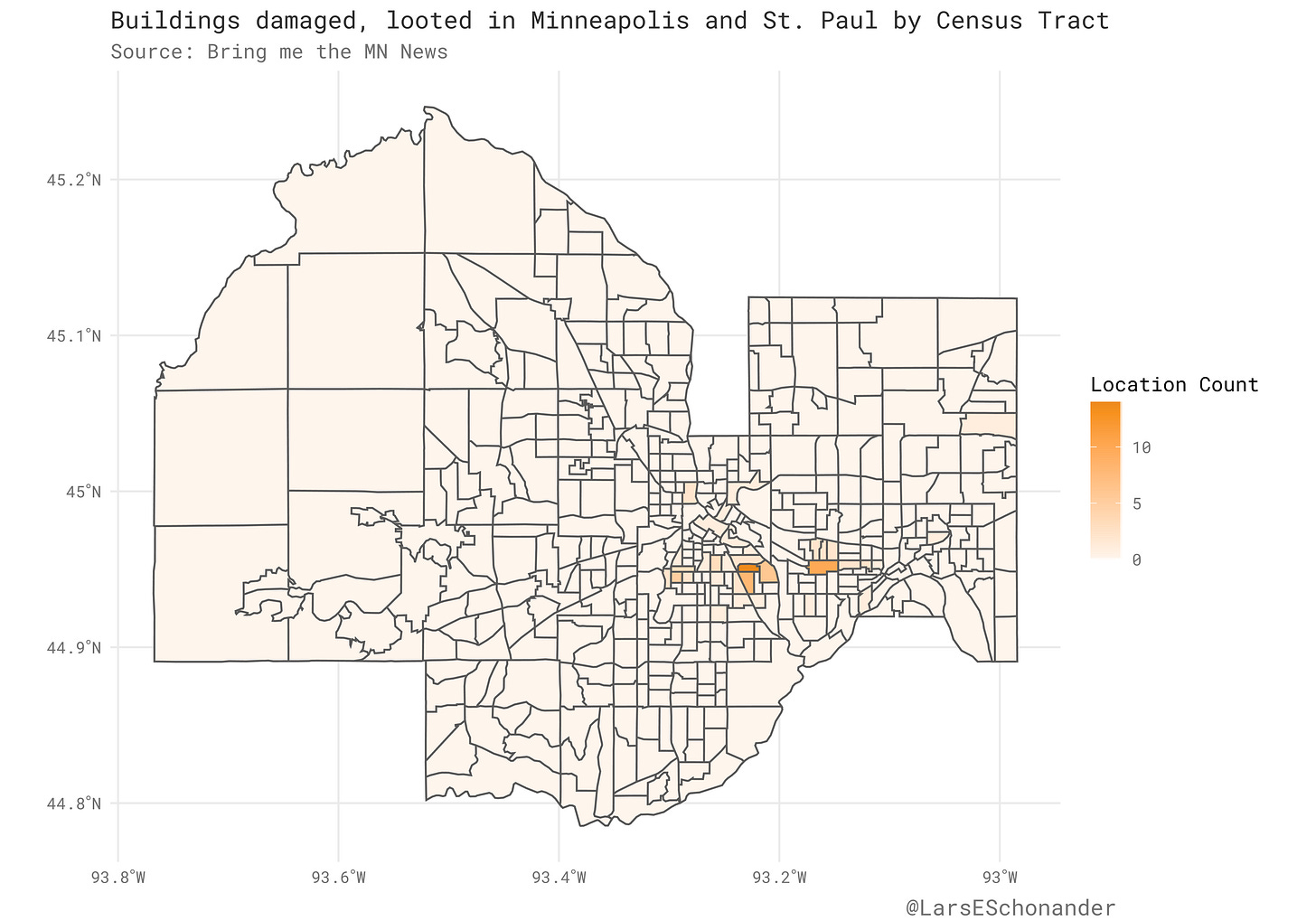

Back at the end of May, Bring the MN News published an article listing the places that suffered damage such as looting or fire and, more importantly, their addresses. First, the addresses needed to be cleaned, and the address and the actual damage split into two different columns, which was possible with regex. Then, once the city was added (Minneapolis or Saint Paul), geocoding was required to get the actual coordinates.

With the ggmap package in R, it is very easy to geocode a set of addresses. First, the address and city columns were concatenated to create a proper address. Then, with the geocode function, latitude and longitude columns were created, making it possible to plot points on a map of Minneapolis and Saint Paul.

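```r
library(tidyverse)
library(ggmap)  # geocode() needs a registered Google Maps API key

damage_data_updated <- damage_data_updated %>%
  mutate(
    Address = str_c(Street, City, sep = ", "),  # full address to look up
    Coords = map(Address, ~ geocode(.x, output = c("latlon"), .null = NA))
  ) %>%
  unnest(Coords)  # expand results into lon and lat columns
```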

Combined with census tracts for Minneapolis and Saint Paul, it is now possible, for example, to plot which census tracts had more damaged addresses.
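The idea behind that last step is a point-in-polygon lookup followed by a count. Below is a toy sketch in base R that uses a ray-casting test. The two square "tracts" and the points are invented for illustration, and this is not the code used for the case study. With real tract shapefiles, a spatial join such as `st_join()` from the sf package would be the more usual route.

```r
# Ray-casting test: is the point (px, py) inside the polygon whose vertices
# are (vx, vy)? Points exactly on an edge are not handled specially.
point_in_polygon <- function(px, py, vx, vy) {
  n <- length(vx)
  inside <- FALSE
  j <- n
  for (i in seq_len(n)) {
    # Does the edge from vertex j to vertex i straddle the horizontal line y = py?
    if ((vy[i] > py) != (vy[j] > py)) {
      x_at_py <- vx[i] + (py - vy[i]) * (vx[j] - vx[i]) / (vy[j] - vy[i])
      if (px < x_at_py) inside <- !inside
    }
    j <- i
  }
  inside
}

# Hypothetical "tracts": two unit squares side by side.
tracts <- list(
  tract_west = list(x = c(0, 1, 1, 0), y = c(0, 0, 1, 1)),
  tract_east = list(x = c(1, 2, 2, 1), y = c(0, 0, 1, 1))
)

# Hypothetical geocoded points with lon and lat columns.
damage_points <- data.frame(
  lon = c(0.2, 0.7, 1.5, 0.4),
  lat = c(0.5, 0.1, 0.9, 0.8)
)

# Assign each point to the first tract containing it, or NA if none does.
damage_points$tract <- vapply(seq_len(nrow(damage_points)), function(k) {
  hit <- vapply(tracts, function(tr) {
    point_in_polygon(damage_points$lon[k], damage_points$lat[k], tr$x, tr$y)
  }, logical(1))
  if (any(hit)) names(tracts)[which(hit)[1]] else NA_character_
}, character(1))

# Count damaged addresses per tract.
damage_per_tract <- table(damage_points$tract)
print(damage_per_tract)
```

The resulting counts can then be joined back onto the tract boundaries and mapped.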

Now, some links…

Yak Collective: The New Old Home

Rediscovering the home as a production frontier

The Yak Collective’s second report, The New Old Home, offers 22 perspectives built around Pamela Hobart’s central thesis: as work returns to the home in the form of remote work opportunities (a trend now dramatically accelerated by pandemic circumstances), we can turn to historical modes of integrated living, reconsidered in light of newer technology, to guide our attempts at co-located life and work.

Many of our contributors are balancing the needs of our children, our parents and grandparents, our partners, and ourselves as we adapt to this unprecedented situation. We offer our ideas freely in the hope that they might help us to design a better future for our homes and families.

Eudaimonium: A Listian Perspective: Old Ideas for New Days

Friedrich List is a borderline paradoxical figure at first sight, seemingly in contradiction with himself: he pioneered a customs union within a fractured Germany, but also campaigned in favor of protectionist policies later on; he both lambasts Smith and Say and the simplicity of the arguments for free trade, and yet repeatedly implies that “one day” there will be free trade; he yearned for a united Germany, but simultaneously rejected outright jingoism. But it's in his nuance and synthesis that List sketches perspectives that we seem to have neglected.

Vicki Boykis: Getting Machine Learning to Production

Much like the steam-powered tricycle, each machine learning project is beautiful and weird and inefficient in its own way. Every company has its unique set of needs, stemming from the fact that each company’s data and business logic run differently. In a recent Hacker News thread on how models are deployed in production, there were over 130 different answers, ranging from using scikit-learn, to Tensorflow, to pickling objects, to serializing them, to AWS Lambdas, to Kubernetes, to even C#.

This lack of standardization, and more importantly, lack of stories about standardization, leads to a lot of reinventing the wheel and gluing things together, across the industry.

The Economist: Forecasting the US Elections

This year, The Economist is publishing its first-ever statistical forecast of an American presidential election. Developed with the assistance of Andrew Gelman and Merlin Heidemanns, political scientists at Columbia University, our model calculates Joe Biden’s and Donald Trump’s probabilities of winning each individual state and the election overall. Its projections will be updated every day at https://projects.economist.com/us-2020-forecast/president.

In another first, we are publishing the source code for what we believe to be the most innovative section of the model. All readers are welcome to download it, explore how it works, tweak its parameters and run it themselves. But for people who don’t feel like wading through a script in the R and Stan programming languages, we have summarised our methodology below.

Loose Threads: Drive Customer Loyalty With Physical Retail Post-COVID-19

In order to keep customers and drive loyalty, each and every person must feel as though they are receiving elements of a high-touch service. At a minimum, the customer needs to feel that measures for safety exceed their expectations. Communications between the brand, employees and the customer must be exceptionally clear and thoughtful. “New” services such as curbside pickup and buy-online, pick-up-in-store (BOPIS) offer additional interactions for White Glove Service. Brands and retailers must adopt these practices in order to meet baseline expectations, given the restrictions imposed on retail under COVID-19.

Miscellany:

I apologize for not publishing in the last few weeks. Life got a little hectic. I promise to get back on track.

I found an amazing book on the history of the Ottoman Empire called Osman’s Dream. In general, the history of the Near East / Central Asia is something I have always been fascinated by. That interest was sparked by reading the book Lost Enlightenment, which covered the golden age of Central Asia, when figures such as Al-Khwarizmi created innovations in mathematics.

Thanks!

Thanks for taking the time to read this. I will be back soon! In the meantime, you can follow me on Twitter or reach out via email.

FYI, another cool tool is CSV2GEO at https://csv2geo.com. The tool supports both forward and reverse geocoding with amazing accuracy and relevance, and it covers addresses worldwide.